Ethical Efficiencies

I've been writing a post on whether and why people should behave morally better after studying ethics. So far, I remain dissatisfied with my drafts. (The same is true of a general theoretical paper I am writing on the topic.) So this week I'll share a piece of my thinking on a related issue, which I'll call ethical efficiency.

Let's say that you aim -- as most people do -- to be morally mediocre. You aim, that is, not to be morally good (or non-bad) by absolute standards but rather to be about as morally good as your peers, neither especially better nor especially worse. Suppose also that you are in some respects ethically ignorant. You think that A, B, C, D, E, F, G, and H are morally good and that I, J, K, L, M, N, O, and P are morally bad, but in fact you're wrong 25% of the time: A, B, C, L, E, F, G, and P are good and the others are bad. (It might be better to do this exercise with a "morally neutral" category also, and 25% is a toy error rate that is probably too high -- but let's ignore such complications, since this is tricky enough as it is.)

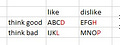

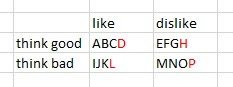

Finally, suppose that some of these acts you'd be inclined to do independently of their moral status: They're enjoyable, or they advance your interests. The others you'd prefer not to do, except to the extent that they are morally good and you want to do enough morally good things to hit the sweet zone of moral mediocrity. The acts you'd like to do are A, B, C, D, I, J, K, and L. This yields the following table, with the acts whose moral valence you're wrong about indicated in red:

Now, what acts will you choose to perform? Clearly A, B, C, and D, since you're inclined toward them and you think they are morally good (e.g., hugging your kids). And clearly not M, N, O, and P, since you're disinclined toward them and you think they are morally bad (e.g., stealing someone's bad sandwich). Acts E, F, G, and H are contrary to your inclinations but you figure you should do your share of them (e.g., serving on annoying committees, retrieving a piece of litter that the wind whipped out of your hand). Acts I, J, K, and L are tempting: You're inclined toward them but you see them as morally bad (e.g., taking an extra piece of cake when there isn't quite enough to go around). Suppose then that you choose to do E, F, I, and J in addition to A, B, C, and D: two good acts that you'd otherwise be disinclined to do (E and F) and two bad acts that you permit yourself to be tempted into (I and J).

Continuing our unrealistic idealizations, let's count up a prudential (that is, self-interested) score and moral score by giving each act a +1 or -1 in each category. Your prudential score will be +4 (+6 for A, B, C, D, I, and J, and -2 for E and F). Your own estimation of your moral score will also be +4 (+6 for A, B, C, D, E, and F, and -2 for I and J). This might be the mediocre sweet spot you're aiming for, short of the self-sacrificial saint (prudential 0, moral +8) but not as bad as the completely selfish person (prudential +8, moral 0). Looking around at your peers, maybe you judge them to be on average around +2 or +3, so a +4 lets you feel just slightly morally better than average.

Of course, in this model you've made some moral mistakes. Your actual moral score will only be +2 (+5 for A, B, C, E, and F, and -3 for D, I, and J). You're wrong about D. (Maybe D is complimenting someone in a way you think is kind but is actually objectionably sexist.) Thus, unsurprisingly, in moral ignorance we might overestimate our own morality. Aiming to be a little morally better than average might, on average, result in hitting the moral average, given moral ignorance.
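For readers who want to check the arithmetic, here is a minimal Python sketch of the toy model so far. The set names and the `score` helper are illustrative assumptions, not part of the model itself; the only inputs are the act lists and the +1/-1 scoring rule given above.

```python
# Toy model from the text: 16 acts, each worth +1 or -1 in each category.
ACTS = "ABCDEFGHIJKLMNOP"
BELIEVED_GOOD = set("ABCDEFGH")  # acts you think are morally good
ACTUALLY_GOOD = set("ABCLEFGP")  # acts that are in fact morally good
INCLINED = set("ABCDIJKL")       # acts you'd like to do regardless of morality


def score(chosen, positive):
    """Hypothetical helper: +1 per chosen act in `positive`, -1 per chosen act outside it."""
    return sum(1 if act in positive else -1 for act in chosen)


chosen = set("ABCDEFIJ")  # the acts you decide to perform

prudential = score(chosen, INCLINED)           # +6 - 2
believed_moral = score(chosen, BELIEVED_GOOD)  # +6 - 2
actual_moral = score(chosen, ACTUALLY_GOOD)    # +5 - 3

# Share of acts whose moral valence you have wrong (D, H, L, and P).
error_rate = len(BELIEVED_GOOD ^ ACTUALLY_GOOD) / len(ACTS)

print(prudential, believed_moral, actual_moral, error_rate)
# prints: 4 4 2 0.25
```

The same helper reproduces the two extremes as you would judge them: choosing all of A through H gives prudential 0 and believed moral +8, and choosing everything you're inclined toward gives prudential +8 and believed moral 0.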

Let's think of "ethical efficiency" as one's ability to squeeze the most moral juice from the least prudential sacrifice. If you're aiming for a moral score of +4, how can you do so with the least compromise to your prudential score? Your ignorance impairs you. You might think that by doing E and F and refraining from K and L, you're hitting +4, while also maintaining a prudential +4, but actually you've missed your moral target. You'd have done better to choose L instead of D -- an action as attractive as D but moral instead of (as you think) immoral (maybe you're a religious conservative and L is starting up a homosexual relationship with a friend who is attracted to you). Similarly, H would have been an inefficient choice: a prudential sacrifice for a moral loss instead of (as you think) a moral gain (e.g., fighting for a bad cause).
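The effect of those two alternatives can be tallied in the same way. The sketch below is only an illustration under the model's +1/-1 scoring; the helper names (`score`, `scores`) and the variable names are assumptions introduced for the example.

```python
# Same toy model: which acts you're inclined toward and which are in fact good.
INCLINED = set("ABCDIJKL")
ACTUALLY_GOOD = set("ABCLEFGP")


def score(chosen, positive):
    """Hypothetical helper: +1 per chosen act in `positive`, -1 otherwise."""
    return sum(1 if act in positive else -1 for act in chosen)


def scores(chosen):
    """Return (prudential score, actual moral score) for a set of chosen acts."""
    return score(chosen, INCLINED), score(chosen, ACTUALLY_GOOD)


original = set("ABCDEFIJ")
swapped = (original - {"D"}) | {"L"}  # do L instead of D
with_h = original | {"H"}             # additionally take on H

for label, chosen in [("original", original), ("L for D", swapped), ("plus H", with_h)]:
    prudential, moral = scores(chosen)
    print(f"{label}: prudential {prudential:+d}, moral {moral:+d}")
# prints:
# original: prudential +4, moral +2
# L for D: prudential +4, moral +4
# plus H: prudential +3, moral +1
```

Swapping L for D reaches the +4 moral target at no prudential cost, while adding H lowers both scores at once.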

Perhaps this is schematic to the point of being silly. But I think the root idea makes sense. If you're aiming for some middling moral target rather than being governed by absolute standards, and if in the course of that aiming you are balancing prudential goods against moral goods, the more moral knowledge you have, the more effective you ought to be in efficiently trading off prudential goods for moral ones, getting the most moral bang for your buck. This is even clearer if we model the decisions with scalar values: If you know that E is +1.2 moral and -0.6 prudential, then it would make sense to choose it over F, which is +0.9 moral and -0.6 prudential. If you're ignorant about the relative morality of E and F, you might just flip a coin, not realizing that E is the more efficient choice.
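One way to make the scalar comparison concrete is to rank acts by moral gain per unit of prudential cost. That ratio is an assumption chosen for illustration, not the only way to cash out "efficiency", and the `efficiency` function and `acts` table are hypothetical names. The prudential values of -0.6 are written here as costs of 0.6.

```python
# Scalar values from the text, as (moral gain, prudential cost) per act.
acts = {
    "E": (1.2, 0.6),
    "F": (0.9, 0.6),
}


def efficiency(moral_gain, prudential_cost):
    """Assumed measure: moral gain bought per unit of prudential sacrifice."""
    return moral_gain / prudential_cost


# With full moral knowledge you pick the act with the best ratio.
best = max(acts, key=lambda name: efficiency(*acts[name]))
print(best)
# prints: E
```

Because E and F cost the same here, the ratio just ranks them by moral gain; it only starts doing independent work once the prudential costs differ.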

In some ways this resembles the consequentialist reasoning behind effective altruism, which explores how to give resources to others in a way that most effectively benefits those others. However, ethical efficiency is more general, since it encompasses all forms of moral tradeoff including free-riding vs. contributing one's share, lying vs. truth-telling, courageously taking a risk vs. playing it safe, and so on. Also, despite having mathematical features of the sort generally associated with the consequentialist's love of calculations, one needn't be a consequentialist to think this way. One could also reason in terms of tradeoffs in strengths and weaknesses of character (I'm lazy in this, but I make up for it by being courageous about that) or the efficient execution of deontological imperfect duties. Most of us do, I suspect, to some extent weigh up moral and prudential tradeoffs, as suggested by the phenomena of moral self-licensing (feeling freer to do a bad thing after having done a good thing) and moral cleansing (feeling compelled to do something good after having done something bad).

If all of this is right, then one advantage of discovering moral truths is discovering more efficient ways to achieve your mediocre moral targets with the minimum of self-sacrifice. That is one, perhaps somewhat peculiar, reason to study ethics.