On Measuring People Twice

Lots of psychological studies involve measuring people twice. For example, in the imagery literature, there's a minor industry that seeks to relate self-reports about imagery to performance on cognitive tasks that seem to involve visual imagery, such as visual memory tests or mental rotation tasks.

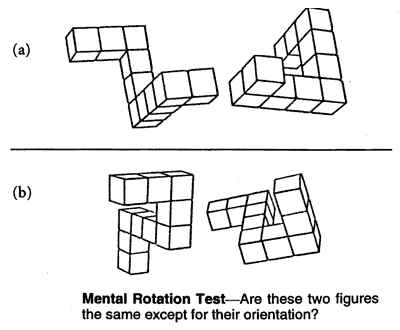

(A typical mental rotation task presents two line drawings of 3-D figures and asks if one is a simple rotation of the other, for example:

Image from http://www.skeptic.com here.)

Participants in such studies thus receive two tests, the cognitive test in question and also a self-report imagery test of some sort, such as the Vividness of Visual Imagery Questionnaire (VVIQ), which asks people to form various visual images and then rate their vividness. Correlations between the two measures will often -- though by no means always -- be found, and they will be taken to show that people with better (e.g. more vivid) imagery do in fact have more skill at the cognitive task in question.

This drives me nuts.

Reactivity between measures is, I think, a huge deal in such cases. Let me clarify by developing the imagery example a little further.

Suppose you’re a participant in an experiment on mental imagery – an undergraduate, say, volunteering to participate in some studies to fulfill psychology course requirements. First, you’re given the VVIQ, that is, you’re asked how vivid your visual imagery is. Then, immediately afterward, you’re given a test of your visual memory – for example, a test of how many objects you can correctly recall after staring for a couple of minutes at a complex visual display. Now if I were in such an experiment and I had rated myself as an especially good visualizer when given the VVIQ, I might, when presented with the memory test, think something like this: “Damn! This experimenter is trying to see whether my imaging ability is really as good as I said it was! It’ll be embarrassing if I bomb. I’d better try especially hard.” Conversely, if I say I’m a poor visualizer, I might not put too much energy into the memory task, so as to confirm my self-report or what I take to be the experimenter’s hypothesis. Reactivity can work the other way, too, if the subjective report task is given second. Say I bomb the memory (or some other) task, then I’m given the VVIQ. I might be inclined to think of myself as a poor visualizer in part because I know I bombed the first task.

In general, participants are not passive innocents. Any time you give them two different tests, you should expect their knowledge of the first test to affect their performance on the second. Exactly how subjects will react to the second test in light of the first may be difficult to predict, but the probability of such reactivity should lead us to anticipate that, even if measures like the VVIQ utterly fail as measures of real, experienced imagery vividness, some researchers should find correlations between the VVIQ and performance on cognitive tasks. Therefore the fact that some researchers do find such correlations is no evidence at all of the reality of the posited relationship, unless there's a pattern in the correlations that could not just as easily be explained by reactivity.
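To make the worry concrete, here is a toy simulation in Python. It is only a sketch under made-up assumptions, not a model of any actual study: the self-report is generated as pure noise, unrelated to ability by construction, and participants simply put in effort in proportion to how highly they rated themselves. The names (`simulate`, `reactivity`), the normal distributions, and the effect sizes are all hypothetical choices for illustration.

```python
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate(n=5000, reactivity=0.5, seed=0):
    """Correlation between a self-report and a task score when the only
    link between them is reactivity (assumed additive effort model)."""
    rng = random.Random(seed)
    reports, scores = [], []
    for _ in range(n):
        ability = rng.gauss(0, 1)       # real skill at the cognitive task
        report = rng.gauss(0, 1)        # self-report: independent of ability
        effort = reactivity * report    # assumption: high self-raters try harder
        score = ability + effort + rng.gauss(0, 1)  # observed task performance
        reports.append(report)
        scores.append(score)
    return pearson(reports, scores)


if __name__ == "__main__":
    print("no reactivity:  ", round(simulate(reactivity=0.0), 3))
    print("with reactivity:", round(simulate(reactivity=0.5), 3))
```

With `reactivity=0.0` the correlation should hover near zero. With `reactivity=0.5` this particular setup implies a correlation of about 0.33 in expectation, even though the self-report carries no information whatsoever about ability. The numbers themselves mean nothing; the point is only that a respectable-looking correlation can come from reactivity alone.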

In the particular case at hand, actually, I think the overall pattern of data positively suggests that reactivity is the main driving force behind the correlations. For example, to the extent there is a pattern in the relationship between the VVIQ and memory performance, the tendency is for the correlations to be higher in free recall tasks than in recognition tasks. Free recall tasks (like trying to list items in a remembered display) generally require more effort and energy from the subject than recognition tests (like “did you see this, yes or no?”) and so might be expected to show more reactivity between the measures.

The problem of reactivity between measures will plague any psychological subliterature in which participants are generally aware of being measured twice -- including much happiness research, almost any area of consciousness studies that seeks to relate self-reported experience and cognitive skills, the vast majority of longitudinal psychological studies, almost all studies on the effectiveness of psychotherapy or training programs, etc. Rarely, however, is it even given passing mention as a source of concern by people publishing in those areas.