If I use autocomplete to help me write my email, the email is -- we ordinarily think -- still written by me. If I ask ChatGPT to generate an essay on the role of fate in Macbeth, then the essay was not -- we ordinarily think -- written by me. What's the difference?

David Chalmers posed this question a couple of days ago at a conference on large language models (LLMs) here at UC Riverside.

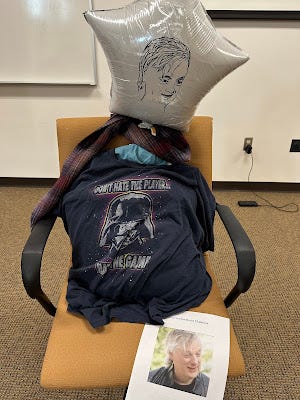

[Chalmers presented remotely, so Anna Strasser constructed this avatar of him. The t-shirt reads: "don't hate the player, hate the game"]

Chalmers entertained the possibility that the crucial difference is that there's understanding in the email case but a deficit of understanding in the Macbeth case. But I'm inclined to think this doesn't quite work. The student could study the ChatGPT output, compare it with Macbeth, and achieve full understanding of the ChatGPT output. It would still be ChatGPT's essay, not the student's. Or, as one audience member suggested (Dan Lloyd?), you could memorize and recite a love poem, meaning every word, but you still wouldn't be the author of the poem.

I have a different idea that turns on segmentation and counterfactuals.

Let's assume that every speech or text output can be segmented into small portions of meaning, which are serially produced, one after the other. (This is oversimple in several ways, I admit.) In GPT, these are individual words (actually "tokens", which are either full words or word fragments). ChatGPT produces one word, then the next, then the next, then the next. After the whole output is created, the student makes an assessment: Is this a good essay on this topic, which I should pass off as my own?

In contrast, if you write an email message using autocomplete, each word precipitates a separate decision. Is this the word I want, or not? If you don't want the word, you reject it and write or choose another. Even if it turns out that you always choose the default autocomplete word, so that the entire email is autocomplete generated, it's not unreasonable, I think, to regard the email as something you wrote, as long as you separately endorsed every word as it arose.

I grant that intuitions might be unclear about the email case. To clarify, consider two versions:

Lazy Emailer. You let autocomplete suggest word 1. Without giving it much thought, you approve. Same for word 2, word 3, word 4. If autocomplete hadn't been turned on, you would have chosen different words. The words don't precisely reflect your voice or ideas, they just pass some minimal threshold of not being terrible.

Amazing Autocomplete. As you go to type word 1, autocomplete finishes exactly the word you intend. You were already thinking of word 2, and autocomplete suggests that as the next word, so you approve word 2, already anticipating word 3. As soon as you approve word 2, autocomplete gives you exactly the word 3 you were thinking of! And so on. In the end, although the whole email is written by autocomplete, it is exactly the email you would have written had autocomplete not been turned on.

I'm inclined to think that we should allow that in the Amazing Autocomplete case, you are author or author-enough of the email. They are your words, your responsibility, and you deserve the credit or discredit for them. Lazy Emailer is a fuzzier case. It depends on how lazy you are, how closely the words you approve match your thinking.

Maybe the crucial difference is that in Amazing Autocomplete, the email is exactly the same as what you would have written on your own? No, I don't think that can quite be the standard. If I'm writing an email and autocomplete suggests a great word I wouldn't otherwise have thought of, and I choose that word as expressing my thought even better than I would have expressed it without the assistance, I still count as having written the email. This is so, even if, after that word, the email proceeds very differently than it otherwise would have. (Maybe the word suggests a metaphor, and then I continue to use the metaphor in the remainder of the message.)

With these examples in mind, I propose the following criterion of authorship in the age of autocomplete: You are author to the extent that for each minimal token of meaning the following conditional statement is true: That token appears in the text because it captures your thought. If you had been having different thoughts, different tokens would have appeared in the text. The ChatGPT essay doesn't meet this standard: There is only blanket approval or disapproval at the end, not token-by-token approval. Amazing Autocomplete does meet the standard. Lazy Emailer is a hazy case, because the words are only roughly related to the emailer's thoughts.
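To make the criterion concrete, here is a toy sketch in Python. It is only an illustration under assumptions I'm adding: the names `TokenRecord` and `authorship_degree` are hypothetical, and scoring "to the extent that" as a simple fraction of tokens is one possible reading, not something the criterion itself specifies. Each token is marked by whether it was separately approved and whether it appears because it captures the writer's thought.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TokenRecord:
    """One minimal token of meaning in a text (hypothetical toy model)."""
    token: str
    approved_individually: bool  # was there a separate decision about this token?
    captures_thought: bool       # does it appear because it captures the writer's thought?


def authorship_degree(records: List[TokenRecord]) -> float:
    """Fraction of tokens meeting both conditions (an assumed way of scoring)."""
    if not records:
        return 0.0
    meeting = sum(
        1 for r in records if r.approved_individually and r.captures_thought
    )
    return meeting / len(records)


words = ["see", "you", "on", "Friday"]

# Amazing Autocomplete: every word is separately approved and matches the thought.
amazing = [TokenRecord(w, True, True) for w in words]

# ChatGPT essay: only blanket approval at the end, no token-by-token approval.
chatgpt = [TokenRecord(w, False, False) for w in words]

# Lazy Emailer: every word is approved, but only some really capture the thought.
lazy = [TokenRecord(w, True, i % 2 == 0) for i, w in enumerate(words)]

print(authorship_degree(amazing), authorship_degree(chatgpt), authorship_degree(lazy))
```

On this toy scoring, Amazing Autocomplete comes out at full authorship, the ChatGPT essay at none, and Lazy Emailer somewhere in between, depending on how many words really track the emailer's thinking.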

Fans of Borges will know the story "Pierre Menard, Author of the Quixote". Menard, imagined by Borges to be a 20th-century author, makes it his goal to authentically write Don Quixote. Menard aims to match Cervantes' version word for word -- but not by copying Cervantes. Instead Menard wants to genuinely write the work as his own. Of course, for Menard, the work will have a very different meaning. Menard, unlike Cervantes, will be writing about the distant past, Menard's version will be full of ironies that Cervantes could not have appreciated, and so on. Menard is aiming at authorship by my proposed standard: He aims not to copy Cervantes but rather to put himself in a state of mind such that each word he writes he endorses as reflecting exactly what he, as a twentieth-century author, wants to write in his fresh, ironic novel about the distant past.

On this view, could you write your essay about Macbeth in the GPT-3 playground, approving one individual word at a time? Yes, but only in the magnificently unlikely way that Menard could write the Quixote. You'd have to be sufficiently knowledgeable about Macbeth, and the GPT-3 output would have to be sufficiently in line with your pre-existing knowledge, that for each word, one at a time, you think, "yes, wow, that word effectively captures the thought I'm trying to express!"

I like the Pierre Menard/ChatGPT analogy! It also reminds me of somehow setting up a Leibnizian pre-established harmony between the output of your choices and those of ChatGPT. This suggests that if I managed to train up an appropriate large language model that has the right sort of harmony with what I would say, we would count its outputs as mine, which seems reasonable.

There is something clumsy about the path to get there though. I rarely write anything word-by-word from the beginning. (Well, maybe emails and Substack comments, with only a few edits along the way.) So it’s a bit weird if the theory has to say that’s the way to evaluate something as mine or not. (Maybe this is an artifact of LLMs working token-by-token in order with no revision?)

But I want to go back to the idea you attribute to Dan Lloyd, which relates to thoughts I’ve had on watching the opening scene of the movie Her (where Joaquin Phoenix’s character is working at his job at ThoughtfulHandwrittenLetters.com). We tend to think there’s something problematic about getting Joaquin Phoenix to write the thoughtful letter to your boyfriend, rather than writing it yourself. But singing a Paul McCartney love song to your boyfriend is probably fine as a substitute for writing and singing your own song. (It’ll probably be a much better song!) And it might be even better to commission Paul McCartney to write a *new* love song for your boyfriend! So maybe the issue with not writing the letter (or the poem or whatever) is more about the social conventions, which could vary between media (letters rather than songs) and could change as social contexts change. It would take a lot for us to get to a point where having figured out a good prompt to get ChatGPT to write something “counts as well as” you writing it, but I don’t think it’s out of the question that we could.