Superhuman Moral Standing

Three Possible Routes

Human beings matter morally. We have moral standing. Our interests deserve consideration -- for our own sakes, and not just as means to ends. Good ethical decision-making requires valuing human lives. Most philosophers hold that humans have the highest moral standing. No entity matters more, and many matter less. It’s worse to kill a human than a dog or a frog or a bacterium or a tree.

That humans have the maximum possible moral standing is sometimes encoded in the philosophical jargon, for example when philosophers say that humans have “full moral status”. The moral gas-gauge tops out at “full” for us, so to speak.

But might some entities have higher moral standing than humans? Futurists envision the possibility of a post-human, transhuman, or superhuman future, or AI systems with superhuman capacities. Might there someday exist entities whose lives are intrinsically more valuable than ours, deserving moral priority over us, just as a human life deserves moral priority over that of a frog?

I see three possible paths to superhuman moral standing.

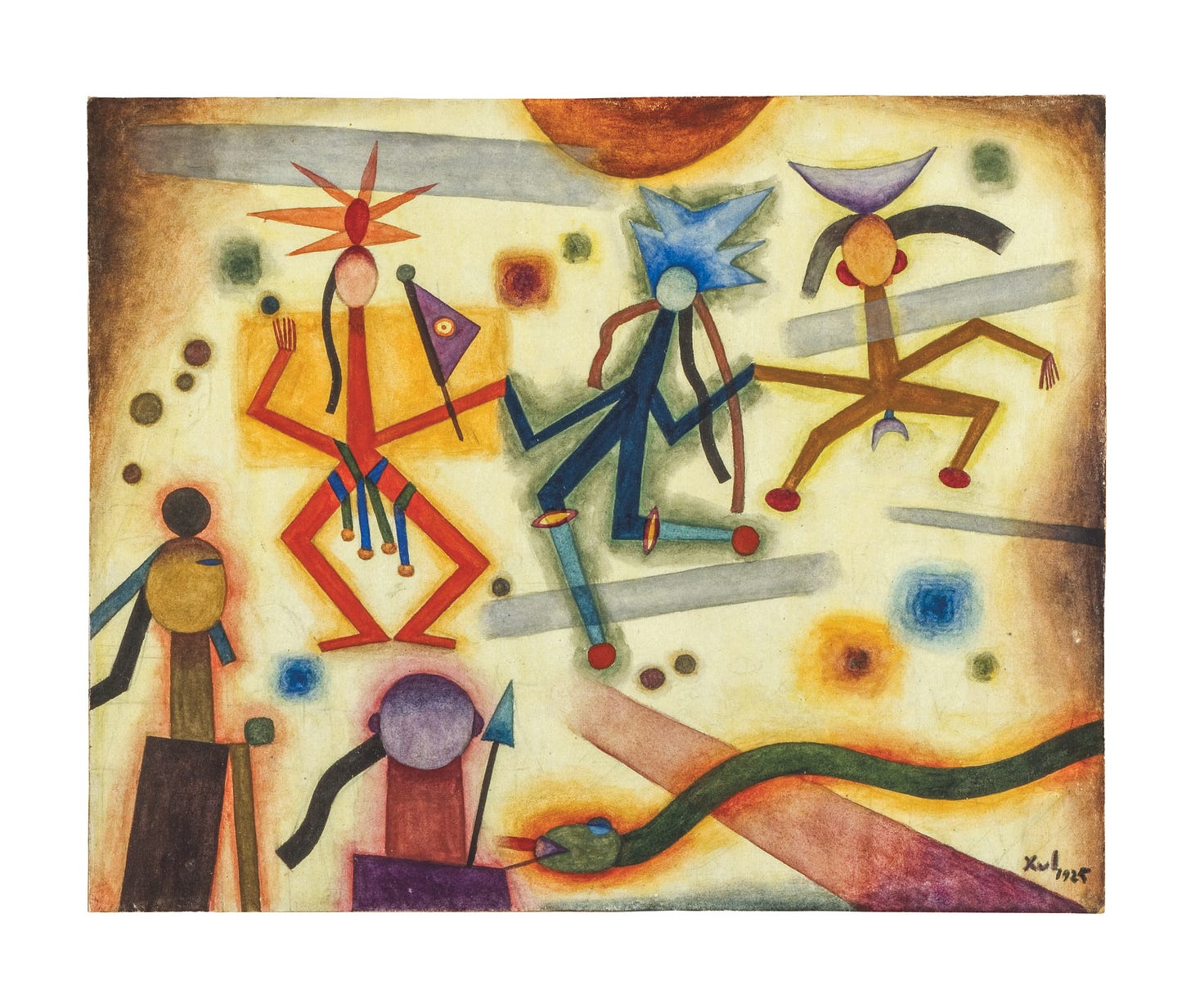

[Xul Solar, San Danza]

First Path: Quantitative Superhumanity

The seemingly most straightforward path to superhuman moral standing would involve having much more of something that we already regard as relevant to moral standing.

Classical utilitarians ground moral standing in the capacity for pleasure and pain. An entity deserves moral consideration to the extent we can increase or decrease its happiness. Humans (it’s assumed) experience more, or at least richer, pleasures and pains than other animals, hence human lives matter more. A utility monster or a superpleasure machine capable of vastly more happiness than an ordinary human might then deserve much greater weight in ethical decision making.

Rationality-based views, like Kant’s, ground moral standing in sophisticated rational capacities, such as our ability to think abstractly about our duties to one another. Maybe -- although this is not Kant’s view -- entities with some but less rational capacity, such as dogs, have significant but subhuman moral standing. Future entities with vastly superior rational capacities might correspondingly have superhuman moral standing.

A third type of view locates the distinctive value of humanity in our capacity to flourish in activities such as intimate friendship, productive work, creative play, and imaginative thought. Dogs also befriend and play, work and think, but perhaps not as richly and flourishingly as humans (though I can imagine disputing that). Possibly, some future entity could far surpass us in such capacities and deserve superhuman moral consideration on those grounds.

The big catch with quantitative approaches to superhumanity -- or maybe instead an appealing feature -- is that the utilitarian, rationalist, and perfectionist views I’ve just described should probably be articulated in egalitarian ways that impair the inference from more of X to higher moral standing. After all, we don’t normally say that mercurial people who feel more joy and suffering in everyday life deserve more moral consideration than those who keep an even keel. Nor do we say that “more rational” people deserve greater moral consideration, or that people who are more productive workers or more creative playmates do.

On all of these views, there’s plausibly a threshold of good enough, above which one has full moral status, fully equal with other humans. People with severe cognitive disabilities have full moral status either by being above that threshold or on more complicated grounds, such as belonging to humanity as a whole. If so, then hypothetical superhumans might also have moral standing only in virtue of exceeding that threshold, without its mattering how far above that threshold they are -- our equals in moral standing rather than our superiors.

To achieve superhuman moral standing despite egalitarianism among humans might then require either (1.) having so much more of the relevant X than an ordinary human as to trigger a genuine difference in kind; or (2.) having enough of X that, as a practical matter, the entity deserves greater weight even if its formal status is equal (as when utilitarians prioritize humans over mice because of their richer possible experiences, despite granting both equal standing in principle).

Second Path: Qualitative Superhumanity

A more radical possibility is that some beings might possess entirely new capacities that we can’t even conceive -- capacities that ground a higher kind of moral standing.

Just as a sea turtle could never understand cryptocurrency, we too are cognitively limited. Some features of the world might be forever beyond human comprehension. (Colin McGinn has suggested that how consciousness arises from matter is one example.) Maybe someday Earth will host entities whose cognitive capacities surpass ours as dramatically as ours surpass sea turtles’. And maybe these entities will deserve a new type of higher moral consideration.

This isn’t just the quantitative thought that such entities might deserve more because they have more rationality or intelligence. The thought is that they might possess an unknown property Z -- something we entirely lack and cannot envision -- that elevates their standing beyond both sea turtles and humans.

For example, maybe sea turtles deserve some moral consideration because they can feel pleasure and pain. But maybe they don’t deserve fully humanlike moral consideration because they lack some other relevant capacity, such as the capacity to consider and adhere to ethical norms. They have some of X but none of Y, while we humans have both X and Y. The qualitative view posits a further Z, inaccessible to us, that grounds superhuman standing.

I can only present this possibility abstractly. But I’m not sure it’s in principle impossible. If moral standing depends on one thing only, such as pleasure or humanlike practical reasoning, then you can resist this move by insisting that only that one thing counts. But pluralists about the grounds of moral standing, who hold that it derives from more than one intrinsically good feature or capacity, have no clear reason to think that humans manifest the exhaustive list.

Third Path: Failures of Subject-Counting

I find egalitarianism attractive: one person, one point in the moral calculus, so to speak. But as I’ve argued elsewhere, future AI persons, if they ever come to exist, might defy the ordinary standards of individuation. They might overlap, merge, divide, back themselves up, and spin off partially or temporarily independent copies.

The norm of equality of persons would then require serious rethinking. There will be no clean count of AI persons to weigh against human persons. A “fission-fusion monster” who can split into a hundred copies at will and later merge or partly merge back together raises difficult questions. Does the monster deserve equal consideration with one person, a hundred people, or some intermediate number? There might be no determinate answer. We’ll need new ethical principles for weighing competing interests. For some purposes we might treat the monster as equivalent to one person; for other purposes we might give it greater consideration. This could constitute a type of partly superhuman moral standing.

Alternatively, consider a massive entity, or a cluster of entities with many overlapping parts, whose total capacity and activity are comparable to those of several humans but who is neither wholly unified nor clearly individuatable into discrete humanlike subparts. We might just do our best with a rough count and give it equal consideration with that many ordinary humans. But another possibility would be to regard it not as approximately N humans but rather as a single, complex entity whose interests deserve significantly more weight than those of a single, ordinary human.

When animals exceed our power in various physical ways, we think we’re entitled to kill them if they are threats to humans (e.g., wolves in the American West, bacteria, disease-carrying mosquitoes) or confine them in fenced preserves, zoos, etc. (e.g., large African mammals). I don’t see why it would, or why it should, be any different with entities that exceeded our cognitive or sensitive capacities, if we could see that they were threats to human happiness. Imagine a mutation that turned cows into brilliant inventors and conspirators, and suppose we feared this would allow them to stage an uprising that amounted to trading places with us in the food chain. Would we think we should morally refrain from removing the threat by extermination (or genetic modification)? I think not. If that’s right, then an obvious response to potential AI systems that we suppose might exceed us in cognitive abilities or conscious sensitivity would be that it’s immoral to bring them into existence, and moral to destroy them if they present a threat. And if the aliens land and we have good grounds for thinking they are a threat to humanity, it seems it would be immoral not to resist by every means possible.

Interesting argument. I am resistant to the framework itself. The imago dei tradition locates human dignity not in capacity but in a creative act, equal and non-negotiable. One cannot grade what is given. Ubuntu, Buddhism, and Daoism arrive at the same resistance from entirely different starting points. That convergence across traditions suggests the ranking framework itself may be the wrong move, however carefully constructed.