The Mimicry Argument Against Robot Consciousness

Suppose you encounter something that looks like a rattlesnake. One possible explanation is that it is a rattlesnake. Another is that it mimics a rattlesnake. Mimicry can arise through evolution (other snakes mimic rattlesnakes to discourage predators) or through human design (rubber rattlesnakes). Normally, it's reasonable to suppose that things are what they appear to be. But this default assumption can be defeated -- for example, if there's reason to suspect sufficiently frequent mimics.
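
To make the defeasibility point concrete, here is a rough Bayesian sketch under simplifying assumptions; nothing in the argument depends on it. Let $r$ be the proportion of genuine rattlesnakes and $m$ the proportion of rattlesnake mimics among the things you might encounter (both symbols are introduced here purely for illustration). Assume that rattlesnakes and mimics always look like rattlesnakes and that nothing else does. Then:

$$P(\text{rattlesnake} \mid \text{looks like a rattlesnake}) = \frac{r}{r + m}$$

If mimics are rare ($m$ much smaller than $r$), this stays near 1 and the default assumption holds. If mimics are as common as rattlesnakes ($m = r$), it drops to $1/2$, and if mimics are nine times as common ($m = 9r$), it drops to $1/10$.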

Linguistic and "social" AI programs are designed to mimic superficial features that ordinarily function as signs of consciousness. These programs are, so to speak, consciousness mimics. This fact about them justifies skepticism about the programs' actual possession of consciousness despite the superficial features.

In biology, deceptive mimicry occurs when one species (the mimic) resembles another species (the model) in order to mislead another species such as a predator (the dupe). For example, viceroy butterflies evolved to visually resemble monarch butterflies in order to mislead predator species that avoid monarchs due to their toxicity. Gopher snakes evolved to shake their tails in dry brush in a way that resembles the look and sound of rattlesnakes.

Social mimicry occurs when one animal emits behavior that resembles the behavior of another animal for social advantage. For example, African grey parrots imitate each other to facilitate bonding and to signal in-group membership, and their imitation of human speech arguably functions to increase the care and attention of human caregivers.

In deceptive mimicry, the signal normally doesn't correspond with possession of the model's relevant trait. The viceroy is not toxic, and the gopher snake has no venomous bite. In social mimicry, even if there's no deceptive purpose, the signal might or might not correspond with the trait suggested by the signal: The parrot might or might not belong to the group it is imitating, and Polly might or might not really "want a cracker".

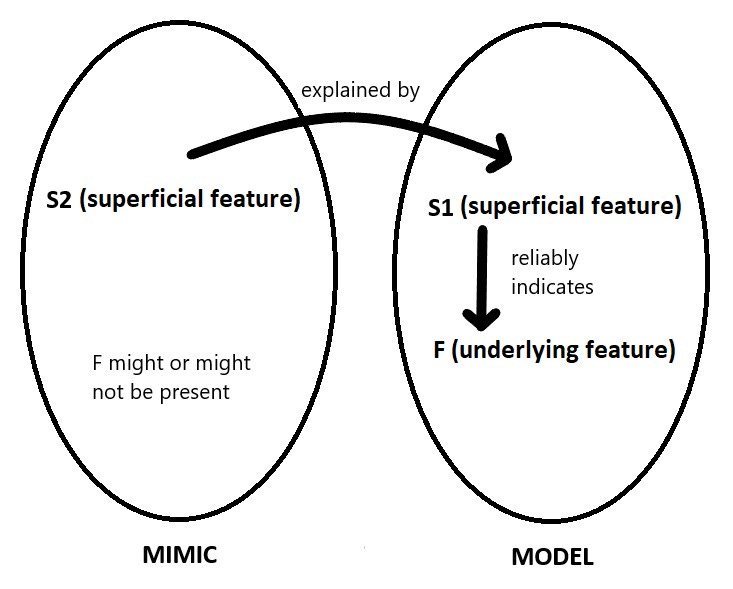

All mimicry thus involves three traits: the superficial trait (S2) of the mimic, the corresponding superficial trait (S1) of the model, and an underlying feature (F) of the model that is normally signaled by the presence of S1 in the model. (In the Polly-want-a-cracker case, things are more complicated, but let's assume that the human model is at least thinking about a cracker.) Normally, S2 in the mimic is explained by its having been modeled on S1 rather than by the presence of F in the mimic, even if F happens to be present in the mimic. Even if viceroy butterflies happen to be toxic to some predator species, their monarch-like coloration is better explained by their modeling on monarchs than as a signal of toxicity. Unless the parrot has been specifically trained to say "Polly want a cracker" only when it in fact wants a cracker, its utterance is better explained by modeling on the human than as a signal of desire.

Figure: The mimic's possession of superficial feature S2 is explained by mimicry of superficial feature S1 in the model. S1 reliably indicates F in the model, but S2 does not reliably indicate F in the mimic.

This general approach to mimicry can be adapted to superficial features normally associated with consciousness.

Consider a simple case, where S1 and S2 are emission of the sound "hello" and F is the intention to greet. The mimic is a child's toy that emits that sound when turned on, and the model is an ordinary English-speaking human. In an ordinary English-speaking human, emitting the sound "hello" normally (though of course not perfectly) indicates an intention to greet. However, a child's toy has no intention to greet. (Maybe its designer, years ago, had an intention to craft a toy that would "greet" the user when powered on, but that's not the toy's intention.) F cannot be inferred from S2, and S2 is best explained by modeling on S1.

Large Language Models like GPT, PaLM, and LLaMA are more complex, but structurally they are mimics.

Suppose you ask ChatGPT-4 "What is the capital of California?" and it responds "The capital of California is Sacramento." The relevant superficial feature, S2, is a text string correctly identifying the capital of California. The best explanation of why ChatGPT-4 exhibits S2 is that its outputs are modeled on human-produced text that also correctly identifies the capital of California as Sacramento. Human-produced text with that content reliably indicates the producer's knowledge that Sacramento is the capital of California. But we cannot infer corresponding knowledge when ChatGPT-4 is the producer. Maybe "beliefs" or "knowledge" can be attributed to sufficiently sophisticated language models, but that requires further argument. A much simpler model, trained on a small set of data containing a few instances of "The capital of California is Sacramento", might output the same text string for essentially similar reasons, without being describable as "knowing" this fact in any literal sense.
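
For readers who want to see how little such a "much simpler model" needs, here is a minimal sketch in Python. It is an illustration only, not a description of how ChatGPT-4 or any real LLM works: the tiny corpus, the function names `train` and `complete`, and the two-word context window are all assumptions made up for this example. The model just counts which word followed each two-word context in its training text and then replays the most frequent continuation.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus (an assumption for illustration, not real training data).
CORPUS = [
    "the capital of california is sacramento",
    "the capital of california is sacramento",
    "the capital of california is sacramento",
    "the capital of oregon is salem",
]


def train(corpus, context_size=2):
    """Count which word follows each context of `context_size` words."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.split() + ["<end>"]
        for i in range(context_size, len(words)):
            context = tuple(words[i - context_size:i])
            counts[context][words[i]] += 1
    return counts


def complete(counts, prompt, context_size=2, max_words=10):
    """Greedily extend the prompt with the most frequent next word."""
    words = prompt.lower().split()
    for _ in range(max_words):
        context = tuple(words[-context_size:])
        if context not in counts:
            break  # context never seen in training: nothing to mimic
        next_word = counts[context].most_common(1)[0][0]
        if next_word == "<end>":
            break
        words.append(next_word)
    return " ".join(words)


model = train(CORPUS)
print(complete(model, "The capital of California is"))
```

The output string correctly names Sacramento, but the explanation of that output is exhausted by the word counts copied from the corpus. Given a prompt whose final two words never appeared in training (say, "The capital of Nevada is"), the sketch simply stops, since there is no pattern to reproduce.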

When a Large Language Model outputs a novel sentence not present in the training corpus, S2 and S1 will need to be described more abstractly (e.g., "a summary of Hamlet" or even just "text interpretable as a sensible answer to an absurd question"). But the underlying considerations are the same. The LLM's output is modeled on patterns in human-generated text and can be explained as mimicry of those patterns, leaving open the question of whether the LLM has the underlying features we would attribute to a human being who gave a similar answer to the same prompt. (See Bender et al. 2021 for an explicit comparison of LLMs and parrots.)

#

Let's call something a consciousness mimic if it exhibits superficial features best explained by having been modeled on the superficial features of a model system, where in the model system those superficial features reliably indicate consciousness. ChatGPT-4 and the "hello" toy are consciousness mimics in this sense. (People who say "hello" or answer questions about state capitals are normally conscious.) Given the mimicry, we cannot infer consciousness from the mimics' S2 features without substantial further argument. A consciousness mimic exhibits traits that superficially look like indicators of consciousness, but which are best explained by the modeling relation rather than by appeal to the entity's underlying consciousness. (Similarly, the viceroy's coloration pattern is best explained by its modeling on the monarch, not as a signal of its toxicity.)

"Social AI" programs, like Replika, combine the structure of Large Language Models with superficial signals of emotionality through an avatar with an expressive face. Although consciousness researchers are near consensus that ChatGPT-4 and Replika are not conscious to any meaningful degree, some ordinary users, especially those who have become attached to AI companions, have begun to wonder. And some consciousness researchers have speculated that genuinely conscious AI might be on the near (approximately ten-year) horizon (e.g., Chalmers 2023; Butlin et al. 2023; Long and Sebo 2023).

Other researchers -- especially those who regard biological features as crucial to consciousness -- doubt that AI consciousness will arrive anytime soon (e.g., Godfrey-Smith 2016; Seth 2021). It is therefore likely that we will enter an era in which it is reasonable to wonder whether some of our most advanced AI systems are conscious. Both consciousness experts and the ordinary public are likely to disagree, raising difficult questions about the ethical treatment of such systems (for some of my alarm calls about this, see Schwitzgebel 2023a, 2023b).

Many of these systems, like ChatGPT and Replika, will be consciousness mimics. They might or might not actually be conscious, depending on what theory of consciousness is correct. However, because of their status as mimics, we will not be licensed to infer that they are conscious from the fact that they have superficial features (S2-type features) that resemble features in humans (S1-type features) that, in humans, reliably indicate consciousness (underlying feature F).

In saying this, I take myself to be saying nothing novel or surprising. I'm simply articulating in a slightly more formal way what skeptics about AI consciousness say and will presumably continue to say. I'm not committing to the view that such systems would definitely not be conscious. My view is weaker, and probably acceptable even to most advocates of near-future AI consciousness. One cannot infer the consciousness of an AI system that is built on principles of mimicry from the fact that it possesses features that normally indicate consciousness in humans. Some extra argument is required.

However, any such extra argument is likely to be uncompelling. Given the highly uncertain status of consciousness science, and widespread justifiable dissensus, any positive argument for these systems' consciousness will almost inevitably be grounded in dubious assumptions about the correct theory of consciousness (Schwitzgebel 2014, 2024).

Furthermore, given the superficial features, it might feel very natural to attribute consciousness to such entities, especially among non-experts unfamiliar with their architecture and perhaps open to, or even enthusiastic about, the possibility of AI consciousness in the near future.

The mimicry of superficial features of consciousness isn't proof of the nonexistence of consciousness in the mimic, but it is grounds for doubt. And in the context of highly uncertain consciousness science, it will be difficult to justify setting aside such doubts.

None of these remarks would apply, of course, to AI systems that somehow acquire features suggestive of consciousness by some process other than mimicry.