The Phi Value of Integrated Information Theory Might Not Be Stable Across Small Changes in Neural Connectivity

In learning and in forgetting, the amount of connectivity between your neurons changes. Throughout your life, neurons die and grow. Through all of this, the total amount of conscious experience you have, at least in your alert, attentive moments, seems to stay roughly the same. You don't lose a few neural connections and with them 80% of your consciousness. The richness of our stream of experience is stable across small variations in the connectivity of our neurons -- or so, at least, it is plausible to think.

One of the best-known theories of consciousness, Integrated Information Theory, purports to model how much consciousness a neural system has by means of a value, Φ (phi), that is a mathematically complicated measure of how much "integrated information" a system possesses. The higher the Φ, the richer the conscious experience; the lower the Φ, the thinner the experience. Integrated Information Theory is subject to some worrying objections (and here's an objection by me, which I invite you also to regard as worrying). Today I want to highlight a different concern from these: the apparent failure of Φ to be robust to small changes in connectivity.

The Φ of any particular informational network is difficult to calculate, but the IIT website provides a useful tool. You can play around with networked systems of about 4, 5, or 6 nodes (above 6, the computation time to calculate Φ becomes excessive). Prefab systems are available to download, with Φ values from less than 1 to over 15. It's fun!

But there are two things you might notice, once you play around with the tool for a while:

First, it's somewhat hard to create systems with Φ values much above 1. Slap 5 nodes together and connect them any which way, and you're likely to get a Φ value between 0 and 1.

Second, if you tweak the connections of the relatively high-Φ systems, even just a little, or if you change a logical operator from one operation to another (e.g., XOR to AND), you're likely to cut the Φ value by at least half. In other words, the Φ value of these systems is not robust across small changes.

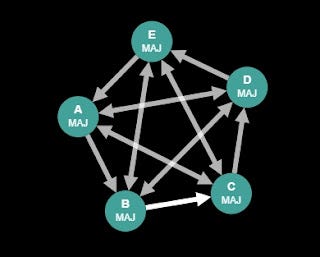

To explore the second point more systematically, I downloaded the "IIT 3.0 Paper Fig. 17 Specialized majority" network which, when all 5 nodes are lit, has a Φ value of 10.7. (A node's being "lit" means it has a starting value of "on" rather than "off".) I then tweaked the network in every way that it was possible to tweak it by changing exactly one feature. (Due to the symmetry of the network, this was less laborious than it sounds.) Turning off any one node reduces Φ to 2.2. Deleting any one node reduces Φ to 1. Deleting one connection, altering its direction (if unidirectional), or changing it from unidirectional to bidirectional or vice versa, always reduces the system's Φ to a value ranging from 2.6 to 4.8. Changing the logic function of one node has effects that are sometimes minor and sometimes large: Changing any one node from MAJ to NOR reduces Φ all the way down to 0.4, while changing any one node to MIN increases Φ to 13.0. Overall, most ways of introducing one minimal perturbation into the system reduce Φ by at least half, and some reduce it by over 90%.
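To make the "at least half" and "over 90%" figures easy to check, here is a minimal Python sketch that turns the Φ values reported above into percent changes from the 10.7 baseline. It only does arithmetic on the numbers in this post; it does not compute Φ itself. The names `BASELINE_PHI`, `PERTURBED_PHI`, and `percent_change` are my own labels for illustration, not anything from the IIT tool.

```python
# Φ values as reported in the text for the "Specialized majority" network.
# This does arithmetic on those reported numbers only; it does not compute Φ.
BASELINE_PHI = 10.7  # all 5 nodes lit

# For connection changes the text gives only a range (2.6 to 4.8),
# so both endpoints are listed here.
PERTURBED_PHI = {
    "turn off one node": 2.2,
    "delete one node": 1.0,
    "change one connection (low end)": 2.6,
    "change one connection (high end)": 4.8,
    "one node MAJ -> NOR": 0.4,
    "one node -> MIN": 13.0,
}


def percent_change(baseline, perturbed):
    """Signed percent change in Φ relative to the baseline value."""
    return 100.0 * (perturbed - baseline) / baseline


for label, phi in PERTURBED_PHI.items():
    change = percent_change(BASELINE_PHI, phi)
    print(f"{label:34s} Φ = {phi:5.1f}  ({change:+.0f}%)")
```

Of the values listed, only the change to MIN leaves Φ above half of its starting value; the node deletion and the NOR substitution are the two cases that cut it by more than 90%.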

To confirm that the "Specialized majority" network was not unusual in this respect, I attempted a similar systematic one-feature tweaking of "CA Paper Fig 3d, Rule 90, 5 nodes". The 5-node Rule 90 network, with all nodes in the default unlit configuration, has a Φ of 15.2. The results of perturbation are similar to the results for the "Specialized majority" network. Light any one node of the rule 90 network and Φ falls to 1.8. Delete any one arrow and Φ also falls to 1.8. Change any one arrow from bidirectional to unidirectional and Φ falls to 4.8. Change the logic of one node and Φ ranges anywhere from a low of 1.8 (RAND, PAR, and >2) to a high of 19.2 (OR).
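The same "cut by at least half" check can be run on the Rule 90 numbers. The sketch below is again plain arithmetic on the values reported in this post, with hypothetical names (`RULE90_BASELINE`, `RULE90_PERTURBED`, `remaining_fraction`, `halves_phi`) chosen only for illustration. For logic changes, the post reports only the lowest and highest results, so only those two are included.

```python
# Φ values as reported in the text for the 5-node Rule 90 network.
# This checks the "cut by at least half" claim; it does not compute Φ.
RULE90_BASELINE = 15.2  # all nodes unlit (default configuration)

RULE90_PERTURBED = {
    "light one node": 1.8,
    "delete one arrow": 1.8,
    "one arrow bidirectional -> unidirectional": 4.8,
    "change one node's logic (lowest reported)": 1.8,
    "change one node's logic (highest reported, OR)": 19.2,
}


def remaining_fraction(baseline, perturbed):
    """Fraction of the baseline Φ that survives the perturbation."""
    return perturbed / baseline


def halves_phi(baseline, perturbed):
    """True if the perturbation leaves at most half of the baseline Φ."""
    return remaining_fraction(baseline, perturbed) <= 0.5


for label, phi in RULE90_PERTURBED.items():
    frac = remaining_fraction(RULE90_BASELINE, phi)
    flag = "halved or worse" if halves_phi(RULE90_BASELINE, phi) else "not halved"
    print(f"{label:48s} {frac:5.2f} of baseline  ({flag})")
```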

These two examples, plus what I've seen in my unsystematic tweaking of other prefab networks, plus my observations about the difficulty of casually constructing a five-node system with Φ much over 1, suggest that, in five-node systems at least, having a high Φ value requires highly specific structures that are unstable to minor perturbations. Small tweaks can easily reduce Φ by half or more.

It would be bad for Integrated Information Theory, as a theory of consciousness, if this high degree of instability in systems with high Φ values scales up to large systems, like the brain. The loss of a few neural connections shouldn't make a human being's Φ value crash down by half or more. Our brains are more robust than that. And yet I'm not sure that we should be confident that the mathematics of Φ has the requisite stability in large, high-Φ systems. In the small networks we can measure, at least, it is highly unstable.

ETA November 10:

Several people have suggested to me that Phi will be more stable to small perturbations as the size of the network increases. I could see how that might be the case (which is why I phrased the concluding paragraph as a worry rather than as a positive claim). Now if Phi, like entropy, were dependent in some straightforward way on the small contributions of many elements, that would be likely to be so. But the mathematics of Phi relies heavily on discontinuities and threshold concepts. I exploit this fact in my earlier critique of the Exclusion Postulate, in which I show that a very small change in the environment of a system, without any change interior to the system, could cause that system to instantly fall from arbitrarily high Phi to zero.

If anyone knows of a rigorous, rather than handwavy, attempt to show that Phi in large systems is stable over minor perturbations, I would be grateful if you pointed it out!