What's the Likelihood That Your Mind Is Constituted by a Rabbit Reading and Writing on Long Strips of Turing Tape?

Your first guess is probably "not very likely."

But consider this argument:

(1) A computationalist-functionalist philosophy of mind is correct. That is, mentality is just a matter of transitioning between computationally definable states in response to environmental inputs, in a way that hypothetically could be implemented by a computer.

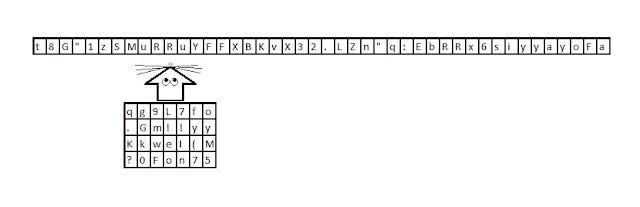

(2) As Alan Turing famously showed, it's possible to implement any finitely computable function on a strip of tape containing alphanumeric characters, given a read-write head that implements simple rules for writing and erasing characters and moving itself back and forth along the tape.

(3) Given 1 and 2, one way to implement a mind is by means of a rabbit reading and writing characters on a long strip of tape that is properly responsive, in an organized way, to its environment. (The rabbit will need to adhere to simple rules and may need to live a very long time, so it won't be exactly a normal rabbit. Environmental influence could be implemented by alteration of the characters on segments of the tape.)

(4) The universe is infinite.

(5) Given 3 and 4, the cardinality of "normally" implemented minds is the same as the cardinality of minds implemented by rabbits reading and writing on Turing tape. (Given that such Turing-rabbit minds are finitely probable, we can create a one-to-one mapping or bijection between Turing-rabbit minds and normally implemented minds, for example by starting at an arbitrary point in space and then pairing the closest normal mind with the closest Turing-rabbit mind, then pairing the second-closest of each, then pairing the third-closest....)

(6) Given 5, you cannot justifiably assume that most minds in the universe are "normal" minds rather than Turing-rabbit implemented minds. (This might seem unintuitive, but comparing infinities often yields such unintuitive results. ETA: One way out of this would be to look at the ratios in limits of sequences. But then we need to figure out a non-question-begging way to construct those sequences. See the helpful comments by Eric Steinhart on my public FB feed.)

(7) Given 6, you cannot justifiably assume that you yourself are very likely to be a normal mind rather than a Turing-rabbit mind. (If 1-3 are true, Turing-rabbit minds can be perfectly similar to normally implemented minds.)
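To make premise 2 concrete, here is a minimal sketch of the kind of rule-following the rabbit would have to do. It is only an illustration, not anything from Turing's paper or from the story: the function name `run_turing_machine`, the rule-table format, the `"start"`, `"carry"`, and `"halt"` state names, and the choice of binary increment as the task are all assumptions made for the example. The point is just that each step is a lookup: read the character under the head, write a character, move one cell, and switch state.

```python
from collections import defaultdict

BLANK = "_"  # hypothetical blank symbol for unused tape cells


def run_turing_machine(rules, tape, state="start", head=0, max_steps=10_000):
    """Run a one-tape machine until it reaches the 'halt' state.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is -1 (left), 0 (stay), or +1 (right).
    """
    # The tape is unbounded in both directions; unseen cells read as BLANK.
    cells = defaultdict(lambda: BLANK, enumerate(tape))
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached before halting")
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += move
        steps += 1
    used = [i for i, symbol in cells.items() if symbol != BLANK]
    if not used:
        return ""
    return "".join(cells[i] for i in range(min(used), max(used) + 1))


# Illustrative rule table: add 1 to a binary number written on the tape.
INCREMENT = {
    ("start", "0"): ("0", +1, "start"),    # scan right to the end of the number
    ("start", "1"): ("1", +1, "start"),
    ("start", BLANK): (BLANK, -1, "carry"),
    ("carry", "1"): ("0", -1, "carry"),    # 1 + carry = 0, keep carrying left
    ("carry", "0"): ("1", 0, "halt"),      # 0 + carry = 1, done
    ("carry", BLANK): ("1", 0, "halt"),    # ran off the left edge: new digit
}

print(run_turing_machine(INCREMENT, "1011"))  # prints 1100
```

Nothing in the loop cares who or what performs the lookup, which is why, if premise 1 is right, a sufficiently patient and long-lived rabbit could stand in for the interpreter.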

I explore this possibility in "THE TURING MACHINES OF BABEL", a story in this month's issue of Apex Magazine. I'll link to the story once it's available online, but also consider supporting Apex by purchasing the issue now.

The conclusion is of course "crazy" in my technical sense of the term: It's highly contrary to common sense and we aren't epistemically compelled to believe it.

Among the ways out: You could reject the computational-functional theory of mind, or you could reject the infinitude of the universe (though both the computational-functional theory and an infinite universe are fairly common positions in philosophy and cosmology these days). Or you could reject my hypothesized rabbit implementation (maybe slowness is a problem even with perfect computational similarity). Or you could hold a view which allows a low ratio of Turing rabbits to normal minds despite the infinitude of both. Or you could insist that we (?) normally implemented minds have some epistemic access to our normality even if Turing-rabbit minds are perfectly similar and no less abundant. But none of those moves is entirely cost-free, philosophically.

Notice that this argument, though skeptical in a way, does not include any prima facie highly unlikely claims among its premises (such as that aliens envatted your brain last night or that there is a demon bent upon deceiving you). The premises are contentious, and there are various ways to resist my combination of them to draw the conclusion, but I hope that each element and move, considered individually, is broadly plausible on a fairly standard 21st-century academic worldview.

The basic idea is this: If minds can be implemented in strange ways, and if the universe is infinite, then there will be infinitely many strangely implemented minds alongside infinitely many "normally" implemented minds; and given standard rules for comparing infinities, it seems likely that these infinities will be of the same cardinality. In an infinite universe that contains infinitely many strangely implemented minds, it's unclear how you could know you are not among the strange ones.
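A small sketch may help show why appealing to ratios does not settle the matter on its own. Suppose, purely for illustration, that both kinds of minds are countably infinite, so each can be labeled 0, 1, 2, and so on. The hypothetical functions `enumerate_minds` and `rabbit_fraction` below list the very same two populations in different orders and report the fraction of Turing-rabbit minds among the first `n` listed. The populations never change; only the order of listing does, and the limiting fraction changes with it.

```python
from itertools import count, islice


def enumerate_minds(normals_per_rabbit):
    """List every normal mind and every rabbit mind exactly once.

    Each round yields `normals_per_rabbit` normal minds, then one rabbit mind.
    Whatever the parameter, every mind of both kinds eventually appears.
    """
    normal_ids = count()
    rabbit_ids = count()
    while True:
        for _ in range(normals_per_rabbit):
            yield ("normal", next(normal_ids))
        yield ("rabbit", next(rabbit_ids))


def rabbit_fraction(normals_per_rabbit, n):
    """Fraction of rabbit minds among the first n minds in the listing."""
    prefix = islice(enumerate_minds(normals_per_rabbit), n)
    return sum(kind == "rabbit" for kind, _ in prefix) / n


print(rabbit_fraction(1, 1_000))        # 0.5
print(rabbit_fraction(9, 1_000))        # 0.1
print(rabbit_fraction(999, 1_000_000))  # 0.001
```

So a "low ratio" view needs some principled reason to prefer one ordering (by distance, by time, by something else) over the others, and that choice is exactly where the question-begging worry arises.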